Several years ago, I started dabbling in iOS app development. I had just left my job at DreamWorks Animation, where my computer had 24GB of memory and 12 cores on a powerful CPU. If that wasn't enough for what I was doing, there was a whole render farm at my disposal. Going down to a slow, single-core processor with a tiny amount of memory was a big adjustment (this was before Apple started using multicore processors in their tablets and phones). Throughout my life, whenever I've wanted to learn something new related to coding, I've implemented a variation of my Bounce program to learn it. Check out my Bounce Coding series to learn more about the different iterations of Bounce. This time, I wanted to learn about multitouch gestures, sound and the limitations of mobile devices. It seemed like a perfect fit to reimagine Bounce as an iOS app.

After releasing Bounce 2: Notes about 5 years ago, I stopped further development. With the release of the iPhone 5S, the first mobile device with a 64-bit processor, the app broke. Due to the change in precision of one of the types used in the Chipmunk physics library on 64-bit devices, there was a mismatch with the types in my app. It didn't take much work to fix, but I never found the time until recently. The other day I rereleased the app on the App Store, so you can play with it on any of your iOS devices. I had a lot of fun developing Bounce and now that my kids are old enough to enjoy playing with it, I'm glad I found the time to get it working again. I hope you enjoy the app. Comment below if you want to know any specifics about the implementation or the app itself!

Here's the instructional video I put together for a Kickstarter 5 years ago with most of the app's functionality.

Features

I’ve released the source code for Bounce so anyone can see the inner workings. The following features are what I focused my attention on. In future posts, I can go into detail with specific code examples. Let me know what you’d like to know more about!

Bouncy

Everything about Bounce is bouncy. The core functionality is a 2D rigid body simulation using the Chipmunk physics library to make shapes bounce around the screen. Bounce takes it further than that, though. Spring equations are used everywhere, from the vertices that define each shape to make them look bouncy, to the animation of the interface that pops up from the bottom, to the forces that connect your gestures to the shapes being moved by them.

All shapes squash and stretch when they collide to make everything feel especially bouncy.
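
To give a sense of what a spring equation looks like in practice, here is a minimal Python sketch of a damped spring pulling a value toward a target. It is not taken from Bounce's source: the function names, the stiffness and damping constants, and the choice of semi-implicit Euler integration are all assumptions made for illustration.

```python
def spring_step(position, velocity, target, stiffness, damping, dt):
    """Advance a 1D damped spring by one time step (semi-implicit Euler)."""
    # Hooke's law pulls toward the target; damping resists the current motion.
    acceleration = -stiffness * (position - target) - damping * velocity
    velocity += acceleration * dt
    position += velocity * dt
    return position, velocity


def simulate(start, target, stiffness=120.0, damping=8.0, dt=1.0 / 60.0, steps=600):
    """Run the spring for a number of frames and return every position."""
    position, velocity = start, 0.0
    positions = []
    for _ in range(steps):
        position, velocity = spring_step(
            position, velocity, target, stiffness, damping, dt
        )
        positions.append(position)
    return positions
```

With these illustrative constants the spring is underdamped, so the value overshoots the target and wobbles before settling, which is the kind of motion that reads as "bouncy". The same step could be applied per vertex, per UI element, or to the offset between a finger and the shape it is dragging.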

Colorful, Glowy and Patterned

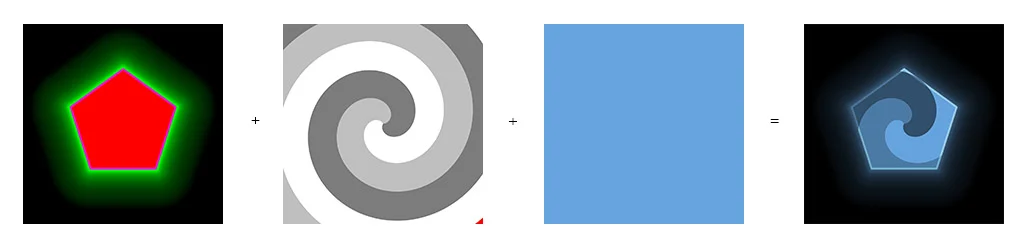

Each shape has a color, a pattern and a glow. Textures and GLSL shaders are used to render the shapes. Patterns and colors are assigned based on user selection and the glow is used throughout Bounce in various ways. Shapes glow when they collide, with an intensity based on the velocity of the collision, before fading back out. The interface uses the glow to indicate an active status. Glow is also combined with bounciness to indicate a sudden change, such as the creation of a shape or the playing of a note.

GLSL shaders combine textures, color and glow intensity to get the final result.
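
As a rough illustration of the glow behavior described above, the following Python sketch maps a collision speed to a glow intensity, fades it over time, and blends it with a pattern sample and a color for a single pixel. Bounce does the blending in GLSL; the function names, the `max_speed` and `half_life` values, and the blend formula here are assumptions, not the app's actual shader math.

```python
def glow_from_impact(impact_speed, max_speed=1000.0):
    """Map a collision speed to a glow intensity clamped to [0, 1]."""
    return max(0.0, min(1.0, impact_speed / max_speed))


def fade(glow, dt, half_life=0.25):
    """Exponentially decay the glow; it halves every `half_life` seconds."""
    return glow * 0.5 ** (dt / half_life)


def shade(pattern_sample, color, glow):
    """Blend one pixel: tint the pattern by the color, then push toward white."""
    tinted = [pattern_sample * channel for channel in color]
    # glow = 0 leaves the tinted color untouched; glow = 1 gives pure white.
    return [min(1.0, t + glow * (1.0 - t)) for t in tinted]
```

Calling `fade` once per frame with the frame's time step gives a glow that flares on impact and then dies away smoothly, independent of frame rate.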

Crisp

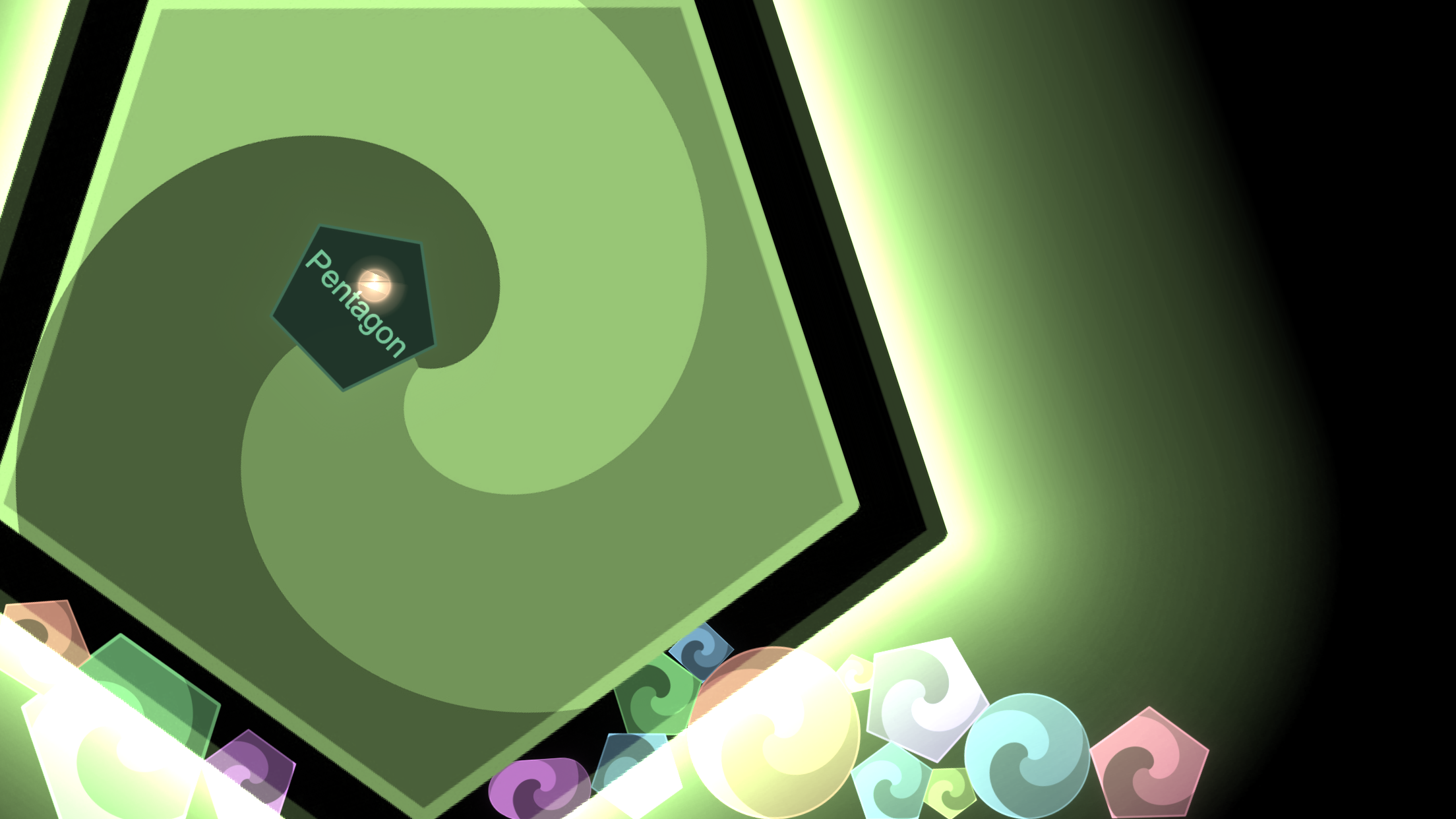

The textures used in Bounce are all very large to provide crisp visuals that take advantage of high-resolution Retina displays. With so many shapes and patterns that can potentially fill the entire screen, this can really push the limits of mobile devices in terms of memory usage. If an app uses too much memory, the OS issues a warning and, if the app doesn’t free memory, eventually kills it. Bounce loads high-resolution textures on demand and frees them when memory is low, falling back to lower-resolution textures.

Any shape or pattern can take up the whole screen, so textures must be very high resolution in those cases. Bounce intelligently loads and unloads textures on the fly to save memory.
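
The load-on-demand and free-on-warning idea can be sketched as a small cache. This is a simplified Python illustration rather than Bounce's implementation: `TextureCache`, `load_low`, `load_high` and `on_memory_warning` are hypothetical names, and real texture loading would go through the graphics API.

```python
class TextureCache:
    """Loads high-res textures on demand and drops them under memory pressure."""

    def __init__(self, load_low, load_high):
        # load_low / load_high are hypothetical callables: name -> texture.
        self._load_low = load_low
        self._load_high = load_high
        self._low = {}
        self._high = {}
        self.high_res_allowed = True

    def get(self, name, want_high=False):
        """Return a texture, preferring high-res only when asked and allowed."""
        if want_high and self.high_res_allowed:
            if name not in self._high:
                self._high[name] = self._load_high(name)
            return self._high[name]
        if name not in self._low:
            self._low[name] = self._load_low(name)
        return self._low[name]

    def on_memory_warning(self):
        """Free every high-res texture; later lookups fall back to low-res."""
        self._high.clear()
        self.high_res_allowed = False
```

A fuller version would re-enable high-resolution loading once memory pressure eases and would evict selectively instead of all at once; both are left out to keep the sketch short.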

Musical

Collisions in Bounce trigger musical notes of the currently selected chord. You have control over what chord is selected through the Bounce pane in the Notes tab, and even finer control in the Advanced tab by assigning specific notes. With so many collisions happening, the device could quickly become overwhelmed if every one produced a sound. Bounce uses Core Audio to efficiently generate as many sounds as the device can handle.

Create your own playable scales, play a single scale from the Bounce pane or just listen to the harmonies when shapes collide.
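
One common way to keep a flood of collisions from overwhelming the audio engine is to cap the number of simultaneous voices. The Python sketch below shows that idea along with the standard conversion from MIDI note numbers to frequencies. It is an assumption about the general technique, not a description of how Bounce uses Core Audio; `VoicePool` and its drop-the-oldest policy are illustrative.

```python
from collections import deque


def midi_to_hz(note):
    """Convert a MIDI note number to a frequency in hertz (A4 = 69 = 440 Hz)."""
    return 440.0 * 2.0 ** ((note - 69) / 12.0)


class VoicePool:
    """Caps the number of simultaneously sounding notes."""

    def __init__(self, max_voices):
        # A bounded deque silently drops the oldest voice when it is full.
        self._voices = deque(maxlen=max_voices)

    def trigger(self, note, volume):
        """Start a voice for a collision; volume might scale with impact speed."""
        self._voices.append((midi_to_hz(note), volume))

    def active(self):
        """Return the currently sounding (frequency, volume) pairs, oldest first."""
        return list(self._voices)
```

For example, with `max_voices=2`, triggering the notes of a C major chord (60, 64, 67) in order leaves only the voices for 64 and 67 active.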

Multitouch

Bounce takes full advantage of the multitouch abilities of mobile devices. You can use as many fingers as your device allows (5 on iPhone and 11 on iPad). Gestures are analyzed and triggered as one-finger taps, drags, flicks and holds, two-finger pinches and three-finger swipes from the sides of your device. These gestures have different effects based on which object or area of the screen receives the gesture.

Bounce can handle as many simultaneous touches as your device supports (5 on iPhone and 11 on iPad).
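
To illustrate how one-finger gestures might be told apart, here is a minimal Python sketch that classifies a finished touch from its duration and travel distance. The thresholds and the use of average speed are assumptions for illustration; Bounce's actual analysis is not described here and a real recognizer would typically look at the speed at release and detect holds while the finger is still down.

```python
def classify_touch(duration, distance, tap_time=0.3,
                   move_threshold=10.0, flick_speed=500.0):
    """Classify a finished one-finger touch as tap, hold, drag or flick.

    duration is in seconds and distance in points; thresholds are illustrative.
    """
    if distance < move_threshold:
        # The finger barely moved: short contact is a tap, long is a hold.
        return "tap" if duration < tap_time else "hold"
    # The finger moved: fast average motion is a flick, slow is a drag.
    speed = distance / duration if duration > 0 else float("inf")
    return "flick" if speed >= flick_speed else "drag"
```

Pinches and multi-finger swipes would need the same kind of test applied across several touches at once, for example by tracking how the distance between two fingers changes.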

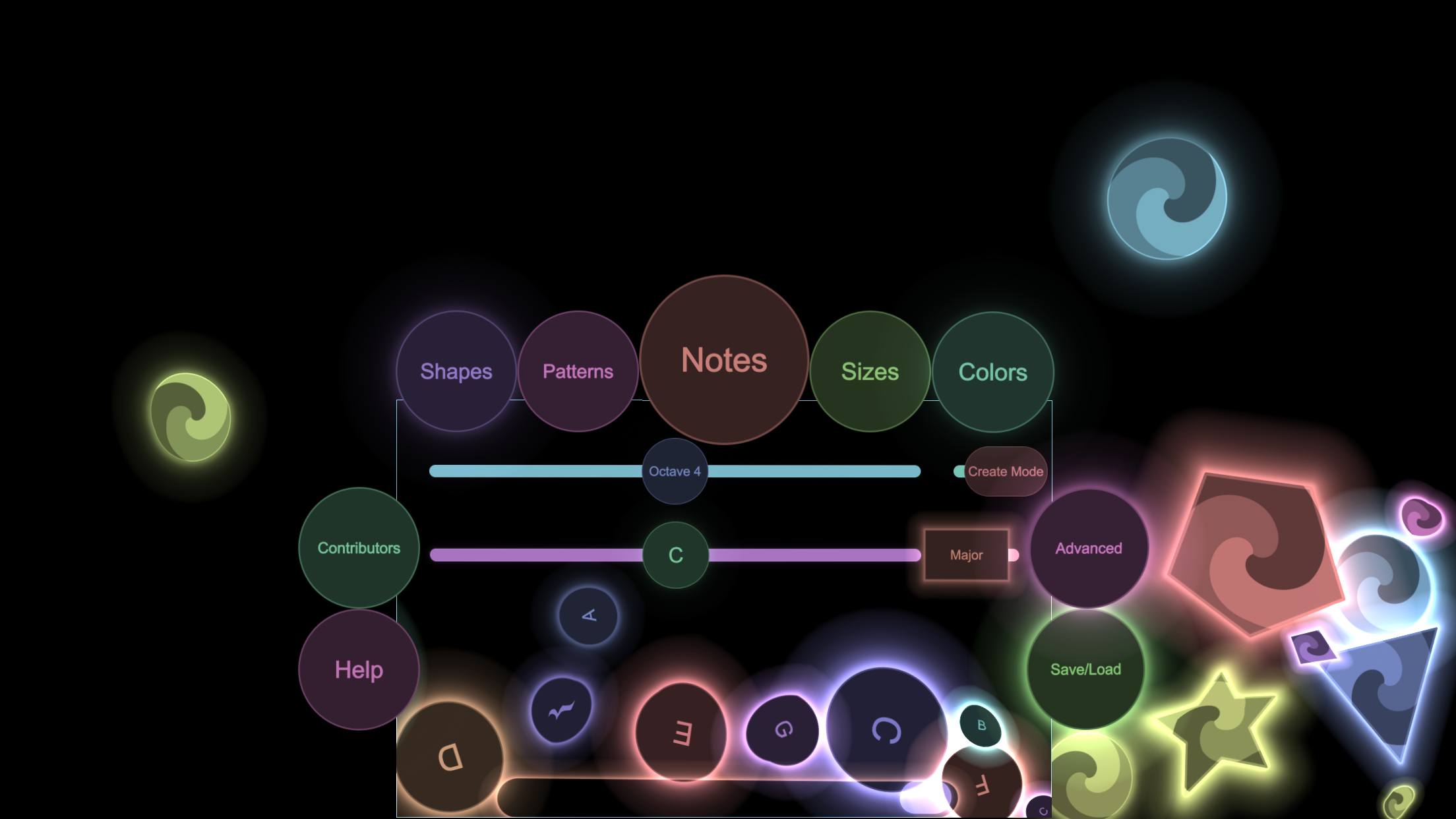

Custom UI

The UI is all custom made to use the same physics as the main simulation. Configuration shapes can be dragged out of the interface panes to assign features (shape, color, pattern, size and note) to other shapes, or dropped or thrown into open space to create a shape with that feature that inherits the velocity of your finger. The interface pane itself interacts with the shapes in the main simulation: shapes bounce off it, and it sends them flying when it first pops out. Buttons, sliders and configuration shapes all participate in their own simulations within the interface to give a unified Bounce experience.

The UI components are as bouncy and fun to play with as the rest of Bounce.
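
Throwing a shape so that it inherits the velocity of your finger requires an estimate of that velocity at release. A simple approach, sketched in Python below, is to compare the last touch sample with the oldest one inside a short time window. The function name, the sample format and the window length are assumptions for illustration, not details from Bounce's source.

```python
def release_velocity(samples, window=0.1):
    """Estimate finger velocity from (time, x, y) samples.

    Only samples within `window` seconds of the final one are considered,
    so an early pause in the drag does not dilute the throw.
    """
    if len(samples) < 2:
        return (0.0, 0.0)
    t_end, x_end, y_end = samples[-1]
    # Samples are assumed to be in chronological order.
    recent = [s for s in samples if t_end - s[0] <= window]
    t0, x0, y0 = recent[0]
    dt = t_end - t0
    if dt <= 0:
        return (0.0, 0.0)
    return ((x_end - x0) / dt, (y_end - y0) / dt)
```

The resulting velocity can be handed to the physics engine as the new shape's initial velocity, so a quick flick sends the shape flying while a gentle release just drops it.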

Thanks for taking a look. Let me know what you think in the comments below!